Every piece of information that reaches you arrived through someone or something: a person reported it, a system collected it, a platform published it, a database stored it. That source carries its own history of accuracy, access, bias, and potential compromise. Before you integrate the information into an assessment, pass it to a colleague, or brief it to a decision-maker, you need to answer two separate questions. First, how much do I trust the source that provided this? Second, how much do I trust the specific information it provided this time?

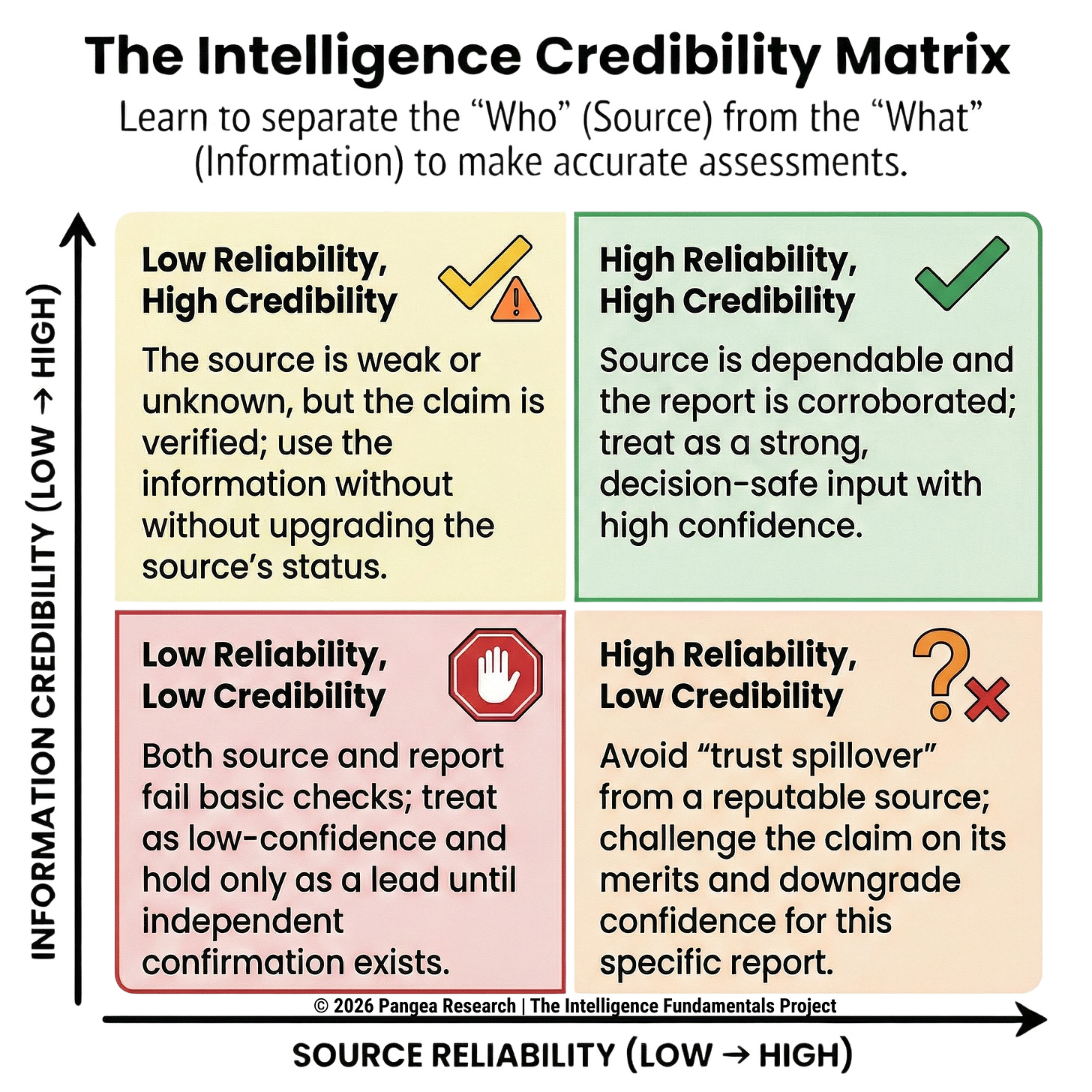

Those two questions need separate answers because a source’s track record doesn’t tell you whether this specific report is accurate. A source with a ten-year history of reliable reporting can still be wrong today. If your opinion of the source automatically becomes your opinion of the information, you’ve built a bias into every assessment you produce, and you won’t see it because it will feel like good judgment. Source reliability and information credibility have to be assessed independently to prevent that (ATP 2-22.9 2012).

How Formal Evaluation Works

Formal evaluation systems score these two judgments independently. The source gets one rating, based on its track record, expertise, access, and trustworthiness. The information gets a separate rating, based on its logical consistency, plausibility, and corroboration status. Those two ratings combine into a single evaluation code attached to the information, so that anyone who encounters it later can see both dimensions at a glance. British military intelligence doctrine defines evaluation as “an appraisal of the quality of the reported information, which is key to determining the reliability of the originator or source and the credibility of the information” (JDP 2-00 2011). Reliability belongs to the source. Credibility belongs to the information. Both must be assessed.
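As a rough illustration, the two-part code can be modelled as a pair of independent fields. This is a minimal sketch, not an implementation from either doctrine publication: the class and field names are invented for the example, and the letter and number scales follow the A-F / 1-6 wording as I read it in ATP 2-22.9 (other systems label the same grades slightly differently).

```python
from dataclasses import dataclass
from enum import Enum


class Reliability(Enum):
    """Rating of the SOURCE: track record, expertise, access."""
    A = "reliable"
    B = "usually reliable"
    C = "fairly reliable"
    D = "not usually reliable"
    E = "unreliable"
    F = "cannot be judged"


class Credibility(Enum):
    """Rating of the INFORMATION in this specific report."""
    CONFIRMED = 1
    PROBABLY_TRUE = 2
    POSSIBLY_TRUE = 3
    DOUBTFULLY_TRUE = 4
    IMPROBABLE = 5
    CANNOT_BE_JUDGED = 6


@dataclass(frozen=True)
class Evaluation:
    """Hypothetical container: the two ratings are stored separately."""
    reliability: Reliability
    credibility: Credibility

    @property
    def code(self) -> str:
        # Combine both dimensions into one short code, e.g. "A6".
        return f"{self.reliability.name}{self.credibility.value}"


# A long-trusted source reporting something that cannot yet be checked.
trusted_but_unverified = Evaluation(Reliability.A, Credibility.CANNOT_BE_JUDGED)
print(trusted_but_unverified.code)
```

Because neither field is derived from the other, a strong source rating cannot silently set the information rating; someone has to assign each one.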

The separation matters in practice. When a due diligence investigator delivers findings about a potential business partner, the client needs to understand where the findings rest on solid ground (multiple independent sources, verified primary records) and where they depend on a single source that hasn’t been independently checked. Collapsing both dimensions into a single “trustworthy” or “untrustworthy” call destroys the reader’s ability to see where the assessment is strong and where it’s fragile.

Source Reliability: What It Depends On

Source reliability is built over time. For human sources (a confidential informant, an industry contact, a subject-matter expert, a liaison partner), reliability depends on three elements: their motivation for reporting, their expertise in the subject they’re reporting on, and their actual access to the information they claim to have (JDP 2-00 2011). Access in turn depends on placement, which is treated separately below. Each of these can be evaluated, and each can change.

Why Motivation Matters

The motivation behind a source’s reporting shapes what they report and how they frame it. An informant cooperating with law enforcement to reduce a criminal charge has an incentive to provide information the handler wants to hear, whether or not it’s accurate. A corporate insider who genuinely believes their company is committing fraud may interpret ambiguous evidence through the lens of that belief, selectively emphasizing facts that support their conclusion and downplaying facts that don’t. A private investigator cultivating sources during a background investigation sees the same pattern: a former colleague with a personal grudge against the subject will frame neutral facts negatively, while a close friend will omit or soften genuinely concerning information. Understanding motivation tells you where to probe and what to corroborate.

Expertise and Its Limits

A source reporting outside their area of knowledge is functionally a different source. An IT manager at a manufacturing company might be an excellent source on the company’s network infrastructure and a terrible source on the company’s financial health. The source may be completely honest and still give you bad information on a topic outside their expertise. The IT manager doesn’t have the training to interpret financial indicators accurately, and they may not recognize that they’re out of their depth. A corporate intelligence analyst interviewing a former employee of a competitor faces a related issue: the source’s knowledge is frozen at the point they left the company, and everything they report about current operations is inference, not observation. Reliability ratings should ideally be topic-specific, though most formal systems don’t make that distinction explicit.

Placement: Where Your Source Sits

Placement refers to where a source is positioned relative to the information you need: their role in an organization, their location in a network, their proximity to decision-makers or events. A confidential informant embedded in a drug trafficking organization has placement that a patrol officer surveilling the same organization from outside does not. A corporate insider working in the finance department of a company under investigation has placement that gives them direct exposure to financial records, internal communications, and executive decisions about revenue reporting.

Placement is what makes a source worth developing in the first place, and it’s also what determines the boundaries of what they can report on. A source with strong placement in one part of an organization may have no placement at all in another. A mid-level engineer at a defense contractor might have excellent placement on technical programs they work on directly, but no placement when it comes to the company’s bid strategy or executive compensation. A private investigator working a fraud case might cultivate a source inside the target’s accounting department; that source has strong placement on financial transactions but no placement on what the CEO discussed in a private meeting with outside counsel. The reliability of any specific report depends partly on whether the source’s placement actually puts them in a position to know what they’re claiming to know.

Access: What Your Source Can Actually See

Placement and access are related but distinct. Placement is where the source sits; access is what information that position lets them reach. A source can have strong placement (they work at the target company) but limited access (they’re in a regional office with no visibility into headquarters decisions). A source who claims firsthand knowledge of a company’s board-level decisions but actually works three levels below the boardroom has an access problem that no amount of honesty or expertise can fix. They’re reporting what they’ve heard, not what they’ve seen, and the information has already been filtered, compressed, and potentially distorted before it reaches them. A private investigator cultivating a source inside a target organization needs to understand precisely what that source can and can’t see from their position. The question that matters is whether the source has actual access to the specific information they’re reporting.

How Reporting History Builds (and Erodes) Trust

Reliability assessment for human sources depends heavily on what has happened before: how accurate the source has been, and how well their previous reporting has held up when checked against other information (JDP 2-00 2011). That history needs continuous review. A source who was reliable last year may have changed jobs, lost access, acquired new financial pressures, or come under the influence of someone with an interest in shaping their reporting. In law enforcement, discovering that a long-trusted informant has been providing fabricated intelligence to maintain their status and payments is a known failure mode that periodic reevaluation is designed to catch. In corporate intelligence, a previously reliable industry contact who has changed employers may now have a competitive incentive to share misleading information about their former company, even if they were scrupulously honest in prior interactions.

Technical Sources: Reliability Beyond People

How Technical Reliability Works

Reliability for a technical source (surveillance cameras, monitoring platforms, geolocation tools, open-source scraping systems, signals collection equipment) depends on whether the system is functioning within its design parameters, or whether age, damage, environmental interference, or configuration error has degraded its output (JDP 2-00 2011). A corporate security team relying on social media monitoring tools needs to understand how those tools handle non-English text, slang, sarcasm, satire, or images with embedded text. An OSINT analyst using a web scraping tool needs to know whether the tool is capturing everything on a target site or only what the site’s structure allows it to see.

When Technical Sources Get Manipulated

Technical sources face the added risk of deliberate manipulation. A geolocation tool relying on IP addresses can be fooled by VPNs and proxy servers. A social media monitoring platform can be overwhelmed by coordinated inauthentic behavior, reporting bot activity as if it were genuine public sentiment. British military doctrine flags the risk that “all collection activities are susceptible to the risk of detection by an adversary’s counter-intelligence measures and, consequently, deception as well as denial” (JDP 2-00 2011). A corporate security team monitoring social media for brand threats can be misled by a coordinated disinformation campaign designed to look like organic public anger, and without corroboration from other sources, the team has no way to distinguish the campaign from a genuine shift in public opinion. Any technical collection system that an adversary can observe is one they can potentially manipulate.

Information Credibility: Evaluating What You Were Told

Credibility is assessed separately from the source, based on the quality of the information itself. British military doctrine identifies two approaches: internal assessment and external assessment (JDP 2-00 2011).

Testing Information on Its Own Terms

Internal assessment examines the information without reference to anything outside it. Is the content factually plausible? Is it internally consistent, or does it contradict itself? Does it contain claims that are verifiably wrong? A report claiming a meeting took place at a location that was closed on the date in question fails on its face. A source who provides a detailed account of a conversation but gets the date, location, or participants wrong has an internal credibility problem regardless of how reliable you consider the source.

Corroboration and the Independence Trap

External assessment compares the information against other reporting on the same subject to check whether it aligns with or contradicts what other sources are saying. External assessment draws on a wider evidence base, but the information you’re comparing against might itself be wrong, biased, or sourced from the same origin.

Corroboration (confirming information through independent sources) is the gold standard for credibility assessment, but the key word is “independent.” Five news articles that all trace back to the same anonymous leak aren’t five pieces of evidence; they’re one piece of evidence republished five times. Circular reporting, where the same original information cycles through multiple channels until it appears to have independent confirmation, is one of the most common analytical traps across every discipline, from intelligence analysis to journalism to financial due diligence (JDP 2-00 2011). A corporate intelligence analyst who finds the same market rumor in three different trade publications needs to check whether all three sourced the same unnamed executive before treating the rumor as corroborated. Genuine corroboration requires that the confirming sources had independent access to the underlying facts, and verifying independence requires work that many analytical workflows don’t build in.
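The independence check can be sketched in a few lines. This is an illustration only, under stated assumptions: the `Report` type, the `origin` field (the analyst's own judgment of where a claim was first sourced), the threshold of two origins, and the outlet names are all hypothetical. The point is that reports are counted by origin, not by the number of places they appear.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Report:
    outlet: str  # where the analyst found the claim
    origin: str  # analyst's traced judgment of who first supplied it


def independent_origins(reports: list[Report]) -> set[str]:
    """Collapse reports that trace back to the same original source."""
    return {r.origin for r in reports}


def is_corroborated(reports: list[Report], minimum: int = 2) -> bool:
    """Hypothetical rule: at least `minimum` distinct origins are required."""
    return len(independent_origins(reports)) >= minimum


# Three trade publications, one unnamed executive behind all of them.
rumor = [
    Report("Trade Weekly", "unnamed executive"),
    Report("Industry Daily", "unnamed executive"),
    Report("Sector Review", "unnamed executive"),
]
print(len(rumor), "reports,", len(independent_origins(rumor)), "origin")
print("corroborated:", is_corroborated(rumor))
```

The hard part is not the counting but filling in `origin` honestly, which is the tracing work described above.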

When New Information Contradicts What You Already Believe

Contradictory information still needs the same evaluation rigor as information that supports your existing assessment. British military doctrine addresses this directly: contradictory information must be evaluated on its own terms (JDP 2-00 2011). The contradicting report might be correct and the established assessment might be wrong. Both might contain partial truths that need to be reconciled rather than one winning and the other being discarded.

A corporate intelligence analyst whose competitive assessment has been stable for months needs to take seriously a report suggesting a competitor has changed strategy, rather than defaulting to the assumption that the competitor is still doing what they were doing last quarter. The same applies in due diligence, law enforcement case-building, and any other setting where an analyst has invested time and credibility in an existing conclusion.

Working with Unknown Sources

When you receive information from a source you’ve never worked with before, you have no basis for judging reliability. Formal grading systems handle this by assigning a default “cannot be judged” rating to new sources (ATP 2-22.9 2012). An F rating (reliability cannot be judged) “does not mean the source is unreliable, but OSINT personnel have no previous experience with the source upon which to base a determination” (ATP 2-22.9 2012). “Don’t know” is fundamentally different from “don’t trust.”

A first-time source providing information you can’t corroborate also receives a “cannot be judged” credibility rating, for the same reason: you have no external basis for confirming or denying it. A private investigator who gets a cold call from someone offering information about a subject under investigation faces exactly this situation. The caller might be providing accurate, firsthand intelligence that will check out completely, or they might be a friend of the subject trying to feed the investigation false leads. The “cannot be judged” ratings on both axes force you to evaluate the information on its own internal merits: its logical consistency, its specificity, its alignment with whatever else is already known. You rely on those rather than on assumptions about the source based on first impressions, tone of voice, or apparent confidence.

How Ratings Change Over Time

As a source’s reporting history develops, the reliability rating moves up or down based on evidence. Each piece of information the source provides that turns out to be accurate strengthens the reliability assessment. Each piece that turns out to be wrong, exaggerated, or fabricated weakens it. Every practitioner does this informally; the formal system’s advantage is that it makes the assessment explicit, documented, and transferable. When a law enforcement analyst retires or transfers, the reliability assessments they’ve built up over years of working with specific informants don’t disappear; they’re recorded in the system for their successor to use. When a corporate intelligence team brings on a new analyst, the team’s documented source assessments give the new hire a foundation that would otherwise take years to build.
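One way to make that history explicit is to log the outcome of every report that was later checked and derive a suggested rating from the log. The sketch below is an assumption-heavy illustration: `SourceRecord` and its methods are invented names, and the numeric thresholds are hypothetical, since neither doctrine publication cited here defines accuracy cut-offs for each letter.

```python
from dataclasses import dataclass, field

# HYPOTHETICAL cut-offs: minimum share of checked reports that held up.
THRESHOLDS = [(0.95, "A"), (0.80, "B"), (0.60, "C"), (0.40, "D")]


@dataclass
class SourceRecord:
    name: str
    # One entry per checked report: True if it held up, False if it did not.
    outcomes: list[bool] = field(default_factory=list)

    def record(self, held_up: bool) -> None:
        self.outcomes.append(held_up)

    def suggested_rating(self) -> str:
        if not self.outcomes:
            return "F"  # no history yet: reliability cannot be judged
        accuracy = sum(self.outcomes) / len(self.outcomes)
        for floor, letter in THRESHOLDS:
            if accuracy >= floor:
                return letter
        return "E"


contact = SourceRecord("industry contact")
print(contact.suggested_rating())  # no checked reports yet
for held_up in [True] * 9 + [False]:
    contact.record(held_up)
print(contact.suggested_rating())  # 9 of 10 checked reports held up
```

The output is a suggestion for the analyst to review, not a verdict: a hit rate cannot see a change of job, a loss of access, or a new motive to mislead.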

Where the System Breaks Down

Anchoring on First Impressions

An initial reliability assessment tends to become resistant to change even as new evidence accumulates. A source rated A (“completely reliable”) who begins providing subtly inaccurate information may continue to receive the benefit of the doubt long after the evidence should have triggered a downgrade. Your prior confidence creates a mental threshold that new negative evidence has to overcome, and subtle inaccuracies rarely feel dramatic enough to clear it. The same works in reverse: a law enforcement analyst who flagged an informant as unreliable after one bad report may miss months of good intelligence from that same informant because the initial rating stuck. Periodic, systematic reevaluation (conducted on a regular schedule, not triggered by individual reports) is the countermeasure, but it only works if you approach it willing to revise your prior judgment based on what the evidence actually shows.

False Corroboration

Treating multiple reports as independent corroboration without verifying that they actually come from independent sources is difficult to catch because the reports look different on the surface. Three news articles citing the same unnamed official aren’t corroboration; they’re one source republished three times. A due diligence investigator who finds the same derogatory information about a subject in three different commercial databases needs to check whether all three databases pulled from the same original public record before treating it as confirmed through multiple channels. Until each report has been traced back to its origin, the number of reports says nothing about the number of sources.

Letting Source Trust Inflate Information Ratings

When an A-rated source provides information rated 1 (“confirmed”), the system is working correctly. When an A-rated source provides information that should be rated 6 (“cannot be judged”) but gets rated 2 (“probably true”) because “this source is usually right,” the system has broken down. Maintaining separate axes is supposed to prevent that spillover, but it requires active discipline when your trust in the source creates a pull toward trusting the information. The longer you’ve worked with a source and the stronger their track record, the harder it becomes to rate a specific piece of their information as unconfirmed when the evidence warrants it.
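A simple audit can surface candidates for this kind of spillover. The rule below is a hypothetical heuristic, not a doctrinal test: it flags any favourable information rating (1 or 2) from a highly rated source (A or B) when nothing else on record supports it. The function name and the `supporting_items` parameter are assumptions made for the example.

```python
def possible_spillover(reliability: str, credibility: int,
                       supporting_items: int) -> bool:
    """Flag ratings that may rest on source trust rather than evidence.

    reliability: source letter, "A" through "F"
    credibility: information number, 1 (confirmed) through 6 (cannot be judged)
    supporting_items: count of other items on record that agree with the
        report (hypothetical field; the analyst has to maintain it)
    """
    trusted_source = reliability in {"A", "B"}
    favourable_rating = credibility <= 2
    return trusted_source and favourable_rating and supporting_items == 0


# A-rated source, rated "probably true", nothing else on record: review it.
print(possible_spillover("A", 2, supporting_items=0))
# Same source, honestly rated "cannot be judged": nothing to flag.
print(possible_spillover("A", 6, supporting_items=0))
```

A flag does not mean the rating is wrong, only that someone should be able to state what, other than the source's reputation, justifies it.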

What Your Decision-Maker Needs

The person who acts on your assessment (a corporate executive, a police commander, a client, a prosecutor, a general counsel) needs to know where your assessment is built on solid ground and where it’s fragile, because their decisions carry consequences that your analytical judgment alone can’t absorb. A detective presenting a case to a prosecutor needs to distinguish between evidence corroborated through forensic analysis and evidence resting on a single informant’s claim. A private investigator reporting to a law firm needs to flag which background check findings are verified through primary records and which come from databases with known gaps.

When your reader can see the source rating and the information rating separately, they know which claims are confirmed through well-established sources and which depend on a single untested source reporting something you couldn’t corroborate. That’s what lets them make decisions proportional to the evidence rather than treating your entire assessment as equally strong or equally uncertain. Every grading system covered in the remaining articles of this series is a different implementation of this same foundational principle: rate the source, rate the information, keep the two ratings separate, and make both visible.

References

ATP 2-22.9. 2012. Open-Source Intelligence. Department of the Army.

JDP 2-00. 2011. Joint Doctrine Publication 2-00: Understanding and Intelligence Support to Joint Operations. 4th Edition. UK Ministry of Defence.

Originally published on Substack
