The 4x4 system is a compressed evaluation framework that rates the source of information on a four-point letter scale and the information itself on a four-point number scale. The United Nations Office on Drugs and Crime (UNODC) describes it as “widely accepted as common practice for law enforcement agencies” and identifies it as one of two evaluation systems in broadest international use (UNODC 2011). The Organization for Security and Co-operation in Europe (OSCE) confirms this in its guidebook on intelligence-led policing, presenting the 4x4 alongside the 5x5x5 as the most common evaluation frameworks in policing contexts internationally (OSCE 2017). Europol adopted the 4x4 as its standard for evaluating intelligence exchanged between EU member states, and the system is codified in Article 29 of the Europol Regulation (Council of the European Union 2016). Outside Europe, UNODC capacity-building programs have trained police services across the Balkans, Central Asia, and parts of Africa and Latin America on the 4x4, making it one of the most geographically widespread evaluation tools in law enforcement.

The system’s value is speed and consistency. A smaller matrix is faster to apply, easier to train on, and more likely to be used reliably by personnel who aren’t career intelligence analysts. A police analyst in a country receiving OSCE or UNODC training can learn the 4x4 in a single session and apply it consistently from day one. A private security firm operating across multiple jurisdictions with varying levels of analytical sophistication might adopt the 4x4 as a common baseline precisely because it’s simple enough that everyone can use it the same way, even if it means accepting less granularity in the ratings.

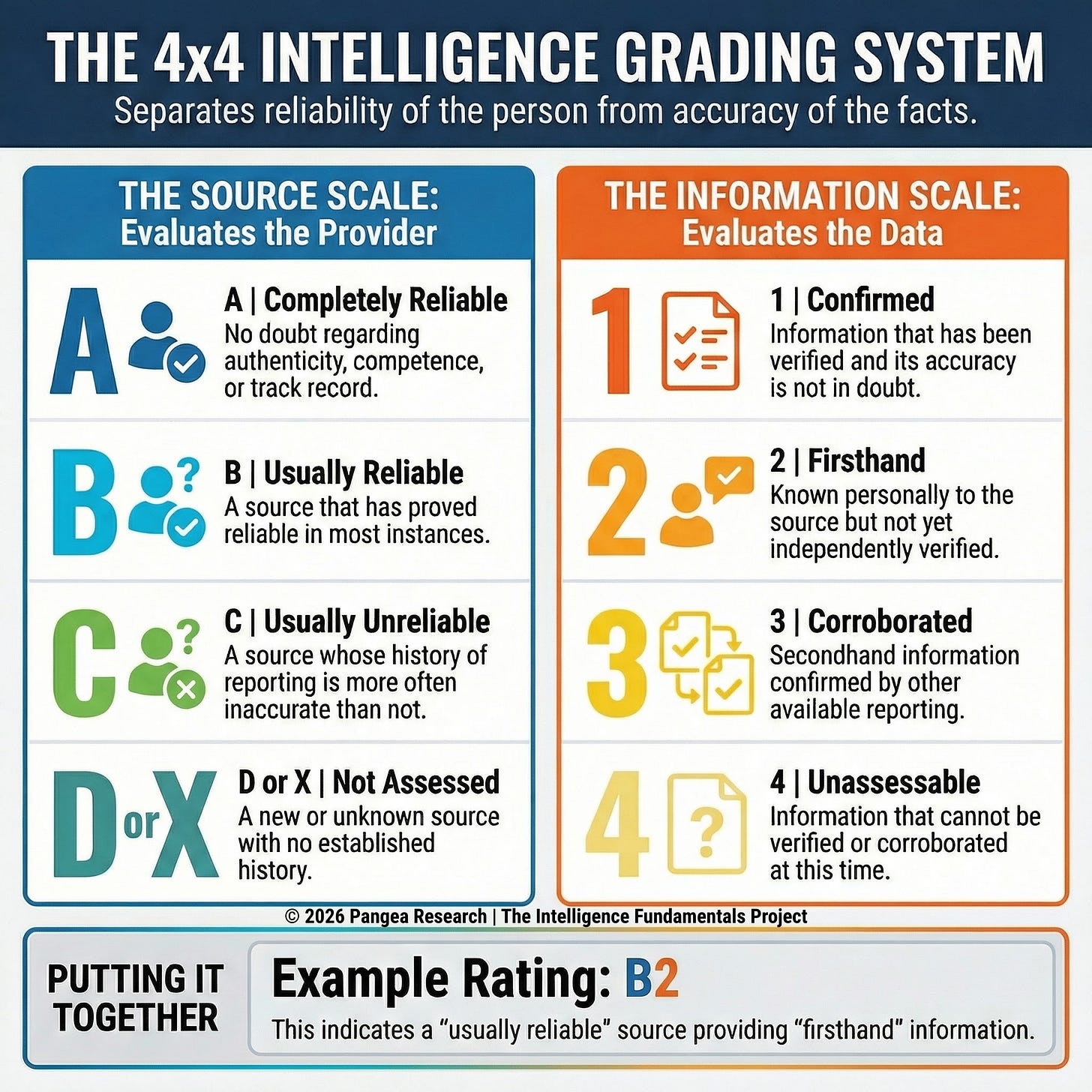

The Source Scale

An A rating describes a source with a perfect track record and no known grounds for doubt. The OSCE defines this as “completely reliable in all instances” (OSCE 2017). The Europol Regulation uses slightly different language: “where there is no doubt as to the authenticity, trustworthiness and competence of the source, or if the information is provided by a source which has proved to be reliable in all instances” (Council of the European Union 2016). The Europol version adds trustworthiness and competence as explicit criteria alongside track record, which gives the evaluator slightly more to work with when rating a source that’s new but clearly authoritative (a government database, for example, or an official registry).

B describes a source with a strong but imperfect history. The OSCE defines it as “usually reliable” (OSCE 2017); Europol says “a source which has in most instances proved to be reliable” (Council of the European Union 2016). C describes a source whose reporting has been unreliable more often than not. Europol’s wording is “a source which has in most instances proved to be unreliable” (Council of the European Union 2016).

The fourth category is the default for sources without an established track record. The OSCE labels it D, “reliability cannot be assessed” (OSCE 2017). Europol labels it X, which serves the same function but avoids implying a graded position below C (Council of the European Union 2016). A police analyst receiving a tip from a first-time caller and a corporate intelligence team evaluating a new trade contact in an unfamiliar market both land here: there’s no history to assess, so the rating says “we don’t know yet.”

The four-point source scale compresses the middle range into just two tiers: B and C. There’s no category for a source that falls between those positions. A contact who has provided accurate information roughly half the time (right in some areas, wrong in others, with no clear pattern) has to be pushed into either B or C, and the choice between those two labels carries more evaluative weight than the system makes transparent.

For a corporate intelligence team evaluating trade sources across international markets, this compression means that contacts with meaningfully different track records might receive the same rating. A vendor who has been accurate on six out of eight past reports and a vendor who has been accurate on four out of eight could both end up rated B if the analyst gives them the benefit of the doubt, or both rated C if the analyst doesn’t. A more granular system would separate those two vendors; the 4x4 forces them into the same bucket. The downstream reader seeing a B or C rating has no way to know which side of that judgment the evaluator landed on or how close the call was.

The Information Scale

A rating of 1 describes information that has been verified and is considered solid. The OSCE defines it as “accuracy not in doubt” (OSCE 2017); Europol uses “information the accuracy of which is not in doubt” (Council of the European Union 2016). A rating of 2, “known personally to the source but not known personally to the official passing it on,” is an access-based distinction: the source claims firsthand knowledge, but the reporting officer hasn’t verified it independently (OSCE 2017). A rating of 3, “not known personally to the source but corroborated by other available information,” describes secondhand information that has been checked against other reporting and found consistent (OSCE 2017). A rating of 4 is the catch-all for information that can’t currently be verified: “accuracy cannot be assessed or corroborated in any way (at this time)” (OSCE 2017).

The “(at this time)” qualifier on the 4 rating is a useful design feature. It reminds the evaluator and the reader that evaluation is provisional. Information rated 4 today might become verifiable tomorrow when a new source comes in, when a partner agency responds to a request, or when the situation on the ground changes enough to produce corroborating or contradicting evidence. A law enforcement analyst receiving a tip about criminal activity in a jurisdiction where they have no other sources might rate the information 4 today because they have no basis for checking it, but coordination with the relevant local agency could produce corroboration within days.

A private investigator working a fraud case might receive uncorroborated information from an anonymous caller; rated 4 on Monday, that same information could move to a 3 by Thursday if financial records confirm the pattern the caller described. The rating captures the analyst’s current state of knowledge, and the “(at this time)” tag makes explicit that the current state is expected to change.

Mixed Variables in the Information Scale

The 1-through-4 information scale folds together several different questions that, strictly speaking, measure different things. A rating of 1 is about accuracy: the information has been verified. A rating of 2 is about the source’s proximity to the event: the source witnessed or experienced it firsthand, but the officer passing it on hasn’t confirmed it. A rating of 3 mixes proximity and corroboration: the source didn’t witness the event personally, but other reporting supports it. A rating of 4 is about the evaluator’s current ability to check: the information can’t be verified yet. Accuracy, proximity, and corroboration are three separate analytical questions, and collapsing them into a single scale creates situations where the rating obscures as much as it reveals (Block 2021).

A piece of information might come from a source who witnessed something firsthand (which would suggest a 2) but also be corroborated by two other independent reports (which would suggest a 3, or arguably a 1). The analyst has to pick one number, and the scale doesn’t have a clean way to express “firsthand and corroborated.” The same problem runs in the other direction: a 3 rating tells the reader that the information is secondhand but corroborated, while a 2 rating tells the reader the information is firsthand but uncorroborated. Which is stronger? The system doesn’t say, and reasonable analysts will disagree.

A due diligence investigator who receives a secondhand tip about a subject’s financial history, then confirms it through public records, has a corroborated piece of information that technically rates a 3 but might be more reliable than a firsthand observation (rated 2) from a source who saw something once and could have misunderstood it. The 4x4 rating doesn’t capture that distinction; the analyst’s narrative notes have to do the work the number can’t.

Gaps and Limitations

The compressed information scale has no category for information the analyst believes is wrong. The Admiralty Code includes “doubtful” (information that’s possible but not logical and has nothing to compare against) and “improbable” (information that contradicts other evidence). The 5x5x5 includes “suspected to be false.” The 4x4 has neither. Information that actively contradicts everything else the analyst knows still gets rated on the 1-through-4 scale, which means the analyst has to flag the contradiction in a narrative note or verbal briefing rather than through the grading system itself.

For organizations operating in high-volume environments where ratings are the primary triage mechanism, contradicted information and merely unverifiable information sit in the same bucket. The analyst knows the difference, but the rating doesn’t show it. A corporate due diligence team processing dozens of source reports on a potential acquisition target might rate a report as 4 (”cannot be assessed”) when the report actually contradicts three other verified sources; the 4 rating tells downstream analysts the information is unverified, but it doesn’t tell them the information is actively suspect.

The 4x4 itself contains no handling dimension. The source and information ratings travel alone, with no built-in code for specifying who can see the intelligence, how it should be stored, or what restrictions apply to sharing it. Organizations that use the 4x4 generally manage dissemination through separate mechanisms. Europol, for example, pairs its 4x4 ratings with a set of handling codes (H0 through H3) that specify whether the intelligence can be used in judicial proceedings, whether it requires the provider’s permission to disseminate, and whether additional restrictions apply (Council of the European Union 2016). But those handling codes aren’t part of the 4x4 rating itself; they’re a separate layer.

For organizations with simple information-sharing architectures (a single agency operating domestically under one legal authority and one classification system) the separation may not cause problems. For organizations operating across jurisdictional lines or sharing intelligence with partners under different legal frameworks, handling restrictions can get separated from the intelligence they apply to when information passes through multiple organizations or enters shared databases where the original evaluator’s notes don’t follow.

A regional law enforcement fusion center receiving intelligence from both a federal agency and a municipal department might store both in the same database with 4x4 ratings attached, but the federal report carries dissemination restrictions that the municipal report doesn’t; without a handling code embedded in the rating itself, those restrictions depend entirely on metadata, cover sheets, or institutional memory to reach the analyst who pulls the report six months later.

When the 4x4 Fits and When It Doesn’t

Less granularity in the rating means more reliance on narrative context, verbal briefings, and the downstream analyst’s own judgment to fill in what the numbers and letters don’t express. For environments where analysts are processing high volumes of reporting under time pressure, or where the personnel making evaluations have limited analytical training, a smaller matrix reduces cognitive load and error rates. A police service building an intelligence function from scratch, with officers who have never graded information before, will get more consistent results from a four-point scale than from a six-point one. The 4x4 lowers the barrier to entry, and consistent application of a simpler system is more valuable than inconsistent application of a precise one.

The compression starts to cost you when the work requires fine distinctions. If your organization routinely handles information that needs to be flagged as contradicted or suspected false (rather than just unverifiable), the 4x4 doesn’t give you a rating for that. If your analysts frequently encounter sources with ambiguous track records that fall squarely between B and C, they’re making forced calls that the system doesn’t record. If your intelligence products cross organizational or jurisdictional boundaries where handling restrictions matter, you’ll need a separate dissemination protocol because the 4x4 doesn’t include one. None of these gaps are fatal; they’re managed every day through narrative supplements, verbal briefings, and organizational policies. But they do mean the 4x4 works best when it’s supported by those additional layers, and worst when the rating is expected to carry all the evaluative weight on its own.

References

Block, Ludo. 2021. “Law Enforcement Use of Information Grading Systems.” Blockint. https://www.blockint.nl/methods/law-enforcement-use-of-information-grading-systems/.

Council of the European Union. 2016. Regulation (EU) 2016/794 of the European Parliament and of the Council of 11 May 2016 on the European Union Agency for Law Enforcement Cooperation (Europol). Article 29.

OSCE. 2017. Guidebook on Intelligence-Led Policing. Organization for Security and Co-operation in Europe.

UNODC. 2011. Criminal Intelligence: Manual for Analysts. Vienna: United Nations Office on Drugs and Crime.

Originally published on Substack

View on Substack →