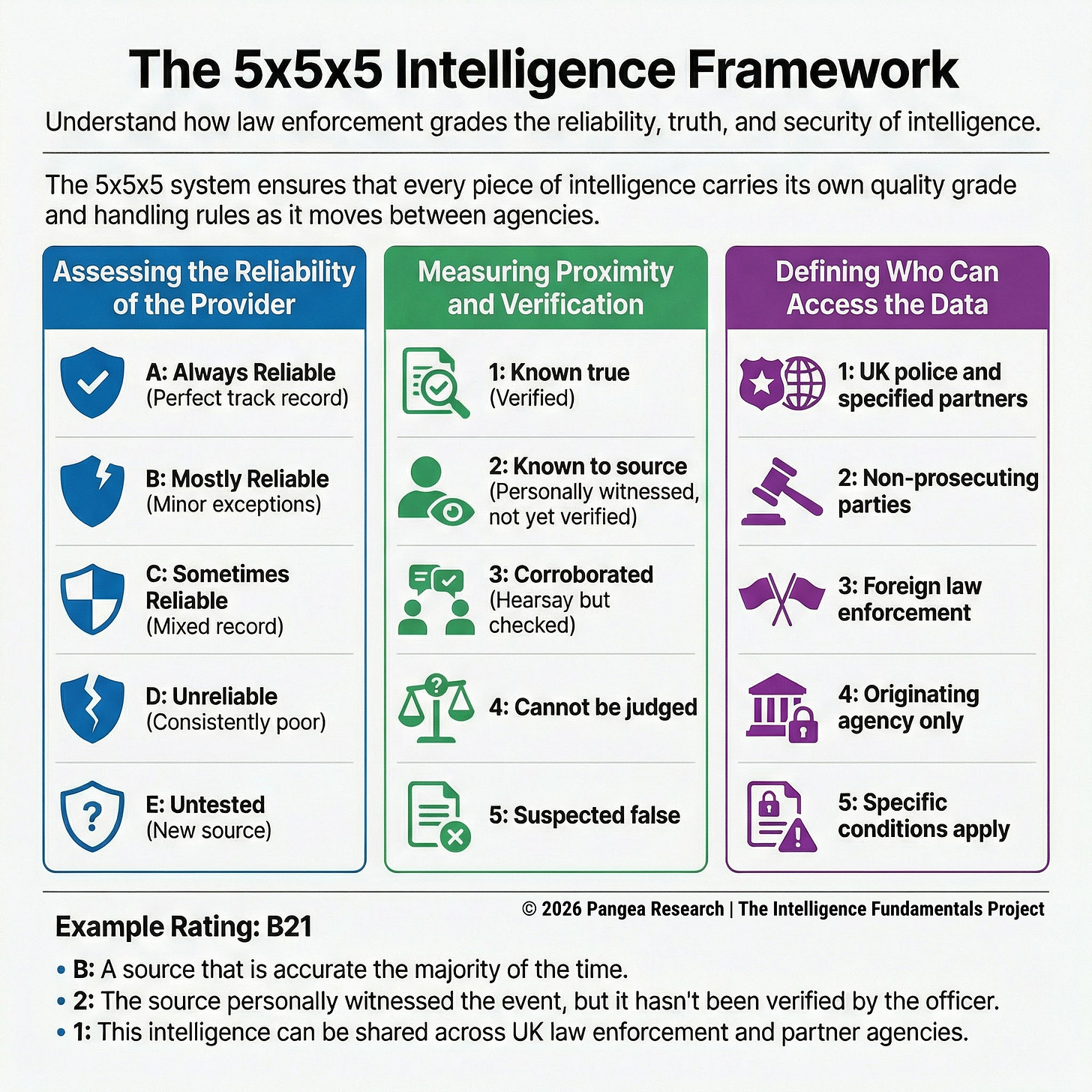

The 5x5x5

UK law enforcement grades intelligence using a framework called the 5x5x5, which evaluates three things at once: the source that provided the information, the information itself, and the rules governing who can see it and what they can do with it. That third dimension (the handling code) is what makes the 5x5x5 distinctive. In police work, the same report from an informant might be suitable for open sharing with a partner agency, restricted to a named investigation team, usable only after removing details that would identify the source, or so sensitive that it requires specific storage conditions and access controls.

Those restrictions matter as much as the quality grade, because sharing intelligence improperly can expose a source, compromise an active operation, or violate legal protections governing how law enforcement handles personal data. The 5x5x5 attaches quality grades and handling rules at the point of entry so they travel together as the intelligence moves from person to person and system to system.

UK police forces have since replaced the 5x5x5 with a simplified 3x5x2 model, a transition that began around 2016 and was formalized in the College of Policing’s updated Authorised Professional Practice (College of Policing 2023). The 3x5x2 compresses the source scale from five levels to three (reliable, untested, not reliable), retains the five-level information scale, and reduces the handling codes to two (permitted, or permitted with conditions). The simplification was designed to make grading faster and more consistent at the point of collection, where patrol officers and first responders often had to apply the codes under time pressure.

Practitioners still need to understand the 5x5x5 for three reasons: older intelligence products still in police databases carry 5x5x5 ratings, international law enforcement partners and private sector organizations continue to use it, and the 5x5x5 remains the most widely referenced police evaluation system in published guidance outside the UK (OSCE 2017).

Origin and Institutional Context

The 5x5x5 became standard across UK policing through the National Intelligence Model (NIM), the UK’s framework for professionalizing intelligence-led policing. One of the NIM’s minimum standards was consistent use of the 5x5x5 for evaluating and submitting intelligence across all forces (ACPO 2005). That institutional mandate gave the system its reach: every police force in England and Wales, many police services in Scotland and Northern Ireland, and partner agencies that share intelligence with UK law enforcement all adopted it. The UK’s National Police Chiefs’ Council guidance on open-source investigation and research extends this requirement to digital intelligence, requiring that OSINT products be evaluated and submitted into force intelligence management systems using a 5x5x5 in most cases (NPCC 2023).

Any practitioner working with or alongside UK police will encounter this format. A private investigator supporting a criminal case submits findings to the force intelligence unit; those findings need a 5x5x5. A corporate fraud team referring a matter to law enforcement packages its intelligence in a format the receiving force can ingest; that format is the 5x5x5 (or its 3x5x2 successor). A foreign law enforcement partner on a joint investigation receives UK intelligence products graded this way and needs to understand what the codes mean.

The Source Scale

The source scale runs from A through E. A (“always reliable”) describes a source with a perfect track record across all known reporting (OSCE 2017). This grade applies rarely; a biometric system with a verified performance record or a technical sensor with documented accuracy might qualify, but most human sources won’t. B (“mostly reliable”) describes a source that has been accurate the great majority of the time, with only minor or infrequent exceptions (OSCE 2017). In a corporate due diligence context, a trade database that consistently returns accurate filings but occasionally has a lag on recent updates would sit here.

C (“sometimes reliable”) describes a source with a genuinely mixed record: right some of the time, wrong some of the time, with no clear pattern that would let you predict which (OSCE 2017). D (“unreliable”) describes a source whose reporting has been consistently poor. E (“untested source”) is the default for sources without an established history (OSCE 2017).

The E grade carries no negative connotation. A police officer receiving a call from a member of the public who has never provided information before assigns an E. A corporate compliance team receiving a whistleblower tip through an anonymous hotline assigns the same grade. In both cases, the grade signals “we don’t yet know how reliable this source is” rather than “this source is unreliable.”

The five-point scale gives the middle range two tiers: C (“sometimes reliable”) and D (“unreliable”). A police informant who provides good intelligence roughly half the time falls into C, while one whose reporting has been consistently wrong falls into D. That separation matters for operational decisions. An analyst deciding how much weight to give a new report from a C-rated source knows the source has a mixed record and will look for corroboration before acting. A D-rated source’s reporting might still be collected and filed, but it’s unlikely to drive an operational decision without substantial independent support.
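
The source scale can be sketched as a small lookup plus a grading helper. This is an illustration only: the labels come from the text above, but the numeric thresholds, the `MIN_HISTORY` cutoff, and the function name `grade_source` are assumptions made for the example, since the 5x5x5 itself defines the grades in words rather than percentages.

```python
from enum import Enum


class SourceGrade(Enum):
    """5x5x5 source grades with the labels quoted in the text."""
    A = "always reliable"
    B = "mostly reliable"
    C = "sometimes reliable"
    D = "unreliable"
    E = "untested source"


# Hypothetical cutoff: below this many checked reports there is no
# established history, so the source stays at E.
MIN_HISTORY = 5


def grade_source(reports_checked: int, reports_accurate: int) -> SourceGrade:
    """Suggest a grade from a track record (illustrative thresholds only)."""
    if reports_checked < MIN_HISTORY:
        return SourceGrade.E  # untested, which is not the same as unreliable
    accuracy = reports_accurate / reports_checked
    if accuracy == 1.0:
        return SourceGrade.A  # perfect record across all known reporting
    if accuracy >= 0.9:
        return SourceGrade.B  # assumed reading of "the great majority of the time"
    if accuracy >= 0.3:
        return SourceGrade.C  # mixed record
    return SourceGrade.D      # consistently poor


if __name__ == "__main__":
    for checked, accurate in [(0, 0), (10, 10), (20, 19), (10, 5), (10, 1)]:
        grade = grade_source(checked, accurate)
        print(f"{accurate}/{checked} accurate: {grade.name} ({grade.value})")
```

In practice the grade is an evaluator's judgment about the source's record; a helper like this would at most suggest a starting point for that judgment.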

The Information Scale

The 5x5x5’s information scale asks: “how was this information obtained and how has it been verified?” The focus is on proximity to the event and the method of corroboration. Instead of asking an analyst to estimate whether information is “probably true” or “possibly true,” the 5x5x5 asks whether the source was there, whether someone else has checked, and how many steps removed from the original event the report sits.

A rating of 1 (“known to be true without reservation”) describes information the evaluator considers verified beyond reasonable question (OSCE 2017). Independent corroboration, logical consistency, and alignment with other known facts are all required. A due diligence analyst who has confirmed a subject’s corporate directorship through three independent sources (Companies House filings, the company’s own annual report, and a commercial database) is looking at 1-rated information.

A rating of 2 (“known personally to the source but not to the person reporting”) describes information the source witnessed, experienced, or obtained firsthand, but which the person passing it along has not independently verified (OSCE 2017). This grade separates whether the source had direct access from whether the reporting officer has confirmed what the source says. A police handler whose informant claims to have personally witnessed a drug transaction records 2-rated information: the source says they saw it, and the handler has no independent basis to confirm or deny that claim. A corporate security manager whose employee reports personally witnessing a colleague removing proprietary files is in the same position; the employee was there, but the manager hasn’t confirmed the event through access logs or video footage yet.

A rating of 3 (“not known personally to the source but corroborated”) describes secondhand information that has been checked against other reporting (OSCE 2017). The source didn’t witness the event; they’re relaying something they heard, read, or were told. Someone has checked it against other available information and found it consistent. Suppose a subject’s former business partner tells a private investigator that the subject was fired from a previous job (hearsay). If the investigator corroborates that claim by confirming the employment gap through LinkedIn history and a reference check, the result is 3-rated information.

A rating of 4 (“cannot be judged”) is the default when neither the proximity of the source to the event nor the corroboration status can be determined (OSCE 2017). A rating of 5 (“suspected to be false”) means the evaluator has positive grounds for believing the information is wrong (OSCE 2017). This is a stronger claim than “unverifiable”: the information contradicts verified reporting, fails logical consistency checks, or is directly refuted by other evidence.
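
The five ratings above can be read as a short decision procedure over proximity and corroboration. The sketch below is one possible reading, not an official algorithm: the `Assessment` fields, the function name `grade_information`, and the order in which the checks are applied are all assumptions made for illustration.

```python
from dataclasses import dataclass

# Labels as quoted in the text.
INFORMATION_LABELS = {
    1: "known to be true without reservation",
    2: "known personally to the source but not to the person reporting",
    3: "not known personally to the source but corroborated",
    4: "cannot be judged",
    5: "suspected to be false",
}


@dataclass(frozen=True)
class Assessment:
    """What the evaluator knows about one piece of information (hypothetical fields)."""
    verified_beyond_question: bool = False  # independently confirmed and consistent
    grounds_to_think_false: bool = False    # contradicted, illogical, or refuted
    source_firsthand: bool = False          # the source witnessed or obtained it directly
    corroborated: bool = False              # checked against other reporting


def grade_information(a: Assessment) -> int:
    """Return a 1-5 information grade. The precedence of checks is an assumption."""
    if a.grounds_to_think_false:
        return 5
    if a.verified_beyond_question:
        return 1
    if a.source_firsthand:
        return 2
    if a.corroborated:
        return 3
    return 4  # neither proximity nor corroboration can be established


if __name__ == "__main__":
    informant_saw_deal = Assessment(source_firsthand=True)
    grade = grade_information(informant_saw_deal)
    print(grade, INFORMATION_LABELS[grade])
```

An item with none of the flags set falls through to 4, which matches the scale's role as the default when nothing about proximity or corroboration can be established.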

The focus on proximity and verification method reflects what law enforcement needs from an evaluation system. A detective deciding whether to authorize a search based on informant intelligence needs to know whether the informant claims to have seen contraband firsthand or is relaying something they heard from a third party. That distinction directly affects whether the information can support a warrant application, bear weight in a prosecution, or justify an operational decision.

An insurance fraud investigator faces a parallel question: did the claimant’s neighbor personally witness the claimant engaging in physical activity inconsistent with their injury claim, or did the neighbor hear about it from someone else? The proximity of the source to the event determines how much weight the information can carry and what the investigator can do with it.

The Handling Dimension

The handling code specifies who can receive the intelligence, how it must be stored, and what conditions apply to its use. The original 5x5x5 handling codes governed dissemination across five levels (ACPO 2010): code 1, intelligence that could be shared with UK law enforcement and other specified agencies; code 2, intelligence that could be shared with UK non-prosecuting parties such as local authorities or regulatory bodies; code 3, intelligence that could be shared with foreign law enforcement agencies; code 4, intelligence restricted to the originating force or agency only; and code 5, intelligence that could be shared subject to specific conditions set by the originator.

Each code carried operational consequences. Intelligence shared under code 1 could move freely across police forces and agencies like HMRC or the former Serious Organised Crime Agency. Intelligence under code 4 stayed within the originating force, and any change to that restriction required a review.
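
A dissemination check driven by the handling code might look like the sketch below. It is a deliberately simplified model: it assumes each code names exactly one category of outside recipient, which the text does not confirm (in practice codes may be broader or cumulative), and the names `Recipient`, `Decision`, and `check_dissemination` are hypothetical.

```python
from enum import Enum, IntEnum


class HandlingCode(IntEnum):
    """The five original handling codes, in the order listed above."""
    UK_LAW_ENFORCEMENT = 1       # UK law enforcement and other specified agencies
    UK_NON_PROSECUTING = 2       # e.g. local authorities, regulatory bodies
    FOREIGN_LAW_ENFORCEMENT = 3  # foreign law enforcement agencies
    ORIGINATOR_ONLY = 4          # stays within the originating force or agency
    ORIGINATOR_CONDITIONS = 5    # shareable subject to the originator's conditions


class Recipient(Enum):
    ORIGINATING_FORCE = "originating force"
    UK_LAW_ENFORCEMENT = "UK law enforcement"
    UK_NON_PROSECUTING = "UK non-prosecuting party"
    FOREIGN_LAW_ENFORCEMENT = "foreign law enforcement"


class Decision(Enum):
    SHARE = "share"
    REFUSE = "refuse"
    CHECK_CONDITIONS = "check the originator's conditions"


# Assumption: one outside recipient category per code (codes 1-3 only).
_PERMITTED = {
    HandlingCode.UK_LAW_ENFORCEMENT: Recipient.UK_LAW_ENFORCEMENT,
    HandlingCode.UK_NON_PROSECUTING: Recipient.UK_NON_PROSECUTING,
    HandlingCode.FOREIGN_LAW_ENFORCEMENT: Recipient.FOREIGN_LAW_ENFORCEMENT,
}


def check_dissemination(code: HandlingCode, recipient: Recipient) -> Decision:
    """Decide whether a report with this handling code may go to this recipient."""
    if recipient is Recipient.ORIGINATING_FORCE:
        return Decision.SHARE  # the originator always holds its own intelligence
    if code is HandlingCode.ORIGINATOR_CONDITIONS:
        return Decision.CHECK_CONDITIONS
    if _PERMITTED.get(code) is recipient:
        return Decision.SHARE
    return Decision.REFUSE  # includes every outside recipient under code 4


if __name__ == "__main__":
    decision = check_dissemination(HandlingCode.ORIGINATOR_ONLY,
                                   Recipient.UK_LAW_ENFORCEMENT)
    print(decision.value)
```

Returning a third outcome for code 5, rather than a plain yes or no, reflects that the originator's conditions have to be read by a person before the intelligence moves.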

Those restrictions exist because intelligence mishandled at the dissemination stage can burn a source, compromise an investigation, or create legal exposure. If a police force shares informant intelligence with a partner agency that doesn’t properly sanitize it (remove details that would identify the source), the informant’s safety is at risk. If intelligence subject to legal privilege gets disclosed beyond the authorized recipients, a prosecution can be challenged. If personal data collected under UK data protection law gets shared with a foreign agency that doesn’t meet equivalent data protection standards, the originating force faces regulatory liability.

A corporate investigations team that develops sensitive intelligence about internal fraud faces a version of the same problem. The findings might be suitable for sharing with the legal department, restricted from the subject’s direct supervisor to avoid tipping off the investigation, appropriate for a board-level briefing in summary form, and potentially subject to legal privilege that limits further disclosure.

A private investigator working a domestic case might have information appropriate for the client’s solicitor but not for the client directly, particularly if the information involves third parties whose privacy is legally protected. The 5x5x5 builds handling restrictions into the evaluation itself; organizations that don’t use the 5x5x5 need to manage equivalent restrictions through separate policies, handling caveats, or covering memos attached to each report.
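
One way to picture handling restrictions being built into the evaluation is a single immutable record that carries all three grades with the report text. The sketch below assumes a hypothetical `GradedReport` class and a compact `B24`-style label; neither is taken from any police system.

```python
from dataclasses import dataclass

SOURCE_GRADES = ("A", "B", "C", "D", "E")


@dataclass(frozen=True)
class GradedReport:
    """A report whose grades and handling code cannot be detached or edited."""
    text: str
    source_grade: str       # "A" through "E"
    information_grade: int  # 1 through 5
    handling_code: int      # 1 through 5

    def __post_init__(self) -> None:
        # Reject incomplete or out-of-range gradings at the point of entry.
        if self.source_grade not in SOURCE_GRADES:
            raise ValueError(f"source grade must be one of {SOURCE_GRADES}")
        if self.information_grade not in range(1, 6):
            raise ValueError("information grade must be 1-5")
        if self.handling_code not in range(1, 6):
            raise ValueError("handling code must be 1-5")

    @property
    def grading(self) -> str:
        """Compact label (assumed format): source, information, handling."""
        return f"{self.source_grade}{self.information_grade}{self.handling_code}"


if __name__ == "__main__":
    report = GradedReport(
        text="Informant states they saw the handover in person.",
        source_grade="B",
        information_grade=2,
        handling_code=4,
    )
    print(report.grading)
```

Because the dataclass is frozen, changing a handling code means creating a new record, which loosely mirrors the review that a change to a restriction requires.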

Gaps and Limitations

The 5x5x5’s information scale measures proximity and verification method rather than probability. That’s a strength for operational police work, where a detective needs to know whether the source was there or is relaying hearsay. It’s a limitation when an analyst needs to express a probability judgment about information that doesn’t fit neatly into the proximity categories. A piece of open-source information that’s logically plausible and consistent with other reporting, but that nobody witnessed firsthand and nobody has independently corroborated, doesn’t map cleanly onto the 1-through-5 scale. It’s not “known to be true” (1), nobody “knew it personally” (2), it hasn’t been formally “corroborated” (3), and it’s not “suspected to be false” (5), so it defaults to 4 (“cannot be judged”) even though the analyst may have a reasonable basis for believing it’s accurate. The Admiralty Code’s probability-based scale (from “confirmed” down through “probably true,” “possibly true,” “doubtful,” and “improbable”) would give that analyst more room to express their judgment.

The source scale compresses the middle range into two tiers (C and D), which means sources with meaningfully different track records can end up with the same grade. An informant who’s been right 60% of the time and one who’s been right 30% of the time both land somewhere in the C-to-D range, and the single letter doesn’t express the distance between them. The Admiralty Code spreads the equivalent range across three tiers (C, D, and E), giving evaluators more room in the space where most real-world sources actually sit.

The handling dimension, which is the 5x5x5’s most distinctive contribution, also creates translation problems when intelligence crosses organizational boundaries. The five handling codes (or the 3x5x2’s two) are specific to UK policing and don’t have equivalents in the Admiralty Code, the 4x4, or most other evaluation systems. A UK police force sending intelligence to a military partner, a foreign law enforcement agency, or a private security firm needs to translate or supplement the handling codes, because the receiving organization’s system won’t have a place to record them. The grade letters and numbers look similar across systems (A2, B3, C1), and that surface resemblance makes it easy to assume they mean the same thing. A 5x5x5 rating of B2 (mostly reliable source, information known personally to the source but not verified by the reporting officer) measures something different from an Admiralty Code B2 (usually reliable source, probably true information). The letters match; the underlying scales don’t.
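
The B2 mismatch can be made concrete by decoding the same two characters under each system. This is a sketch: the 5x5x5 labels and the Admiralty information labels are those quoted in this article, while the remaining Admiralty source labels follow commonly published wording, which varies between publications. The `decode` helper is hypothetical.

```python
SCALES = {
    "5x5x5": {
        "source": {
            "A": "always reliable",
            "B": "mostly reliable",
            "C": "sometimes reliable",
            "D": "unreliable",
            "E": "untested source",
        },
        "information": {
            1: "known to be true without reservation",
            2: "known personally to the source but not to the person reporting",
            3: "not known personally to the source but corroborated",
            4: "cannot be judged",
            5: "suspected to be false",
        },
    },
    "admiralty": {
        # Wording outside B and the information labels is an assumption
        # based on commonly published versions of the code.
        "source": {
            "A": "completely reliable",
            "B": "usually reliable",
            "C": "fairly reliable",
            "D": "not usually reliable",
            "E": "unreliable",
            "F": "reliability cannot be judged",
        },
        "information": {
            1: "confirmed",
            2: "probably true",
            3: "possibly true",
            4: "doubtful",
            5: "improbable",
            6: "truth cannot be judged",
        },
    },
}


def decode(system: str, rating: str) -> tuple[str, str]:
    """Translate a rating such as 'B2' into (source label, information label)."""
    scales = SCALES[system]
    source_letter = rating[0].upper()
    information_digit = int(rating[1:])
    return scales["source"][source_letter], scales["information"][information_digit]


if __name__ == "__main__":
    for system in SCALES:
        source_label, information_label = decode(system, "B2")
        print(f"{system} B2: {source_label}; {information_label}")
```

Keying every lookup on the system name is the point of the design: a bare "B2" with no system attached cannot be decoded safely.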

References

ACPO. 2005. Guidance on the National Intelligence Model. Wyboston: National Centre for Policing Excellence (Centrex).

ACPO. 2010. Guidance on the Management of Police Information. 2nd ed. National Policing Improvement Agency.

College of Policing. 2023. “Intelligence Report.” Authorised Professional Practice. College of Policing.

NPCC. 2023. Published NPCC Guidance: OSINT Research. National Police Chiefs’ Council.

OSCE. 2017. Guidebook on Intelligence-Led Policing. Organization for Security and Co-operation in Europe.

Originally published on Substack
