When a report arrives tagged B2, an analyst knows three things before reading a word: the source has a strong track record, the information is logically consistent with other reporting, and no one has independently confirmed it yet. That entire evaluation fits in two characters. The Admiralty Code (formally the NATO Intelligence Grading System, codified in AJP-2.1 under STANAG 2511) pairs a letter rating for the source's reliability with a number rating for the information's credibility. The letter reflects the source's history; the number reflects this specific report. B2, C4, F1, A5: each combination tells the reader something different about how far they can lean on what they're about to read. The system traces back to 1939, when the British Director of Naval Intelligence needed a way to evaluate the flood of reports arriving from ships, agents, and allied services without attaching lengthy narrative caveats to each one (McLachlan 1968). NATO adopted and standardized the framework, and it remains the most widely used source evaluation shorthand in Western intelligence, military operations, law enforcement fusion centers, and (increasingly) corporate intelligence shops.

The Source Reliability Scale

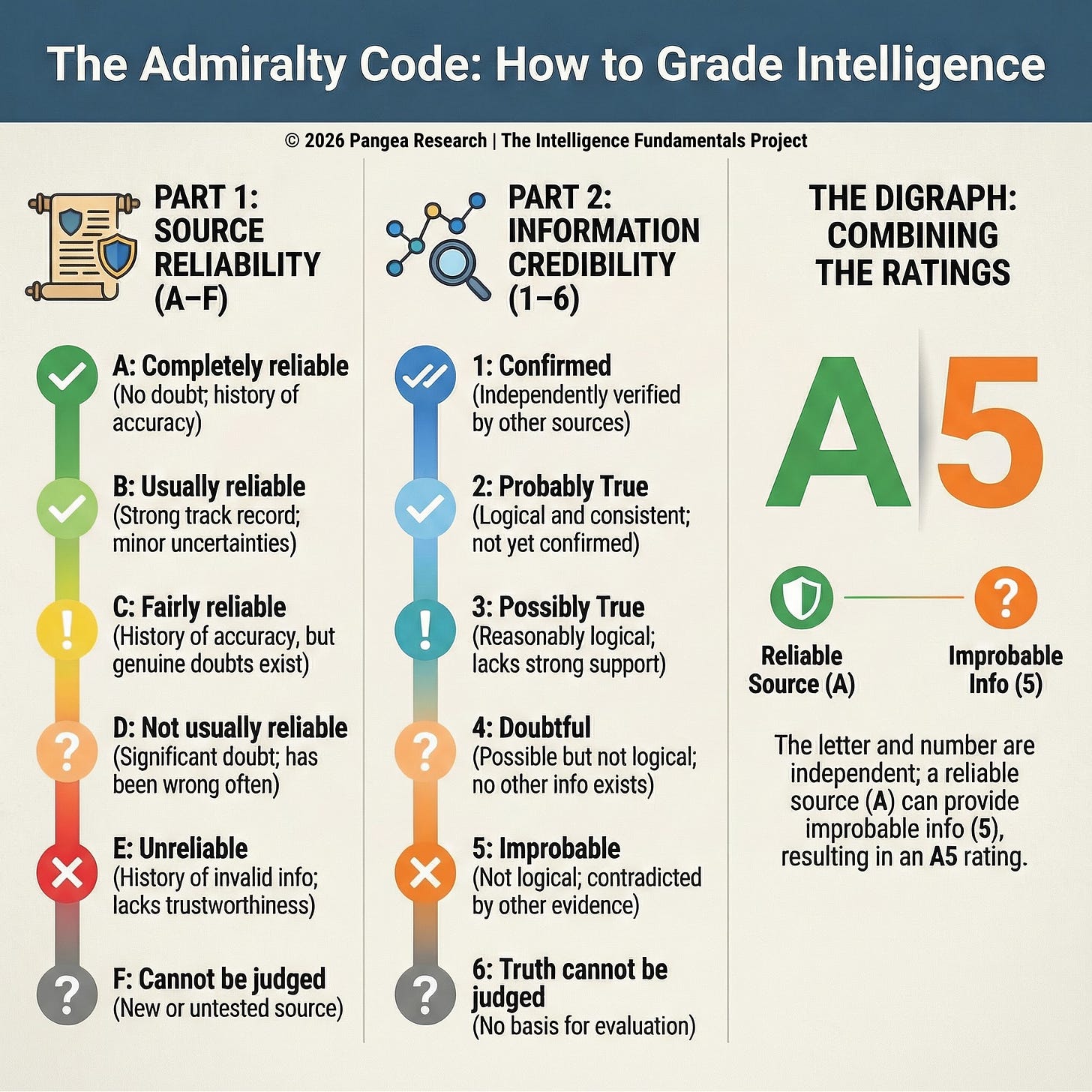

The reliability scale assigns a letter grade from A through F based on the source’s track record. The assessment is cumulative; it reflects performance over time across multiple reporting events. A source earns an A by demonstrating complete reliability across many reports, and loses it the same way: through a pattern of degradation rather than a single miss.

An A rating (“completely reliable” in NATO terminology) means there is no doubt about the source’s authenticity, trustworthiness, or competency, and the source has a history of complete reliability (AJP-2.1 2016). Few sources reach this level. A human source earning an A has provided information repeatedly, across different topics and time periods, and that information has consistently checked out. A technical collection system earning an A has performed within specifications under varying conditions without producing significant errors. In corporate settings, an industry contact who has provided accurate competitive intelligence across dozens of interactions over several years, whose access has been verified, and whose information has never been contradicted by subsequent events would meet this standard.

B (“usually reliable”) acknowledges minor doubts but reflects a strong overall track record. Most established, productive sources end up here. The source’s reporting has been valid most of the time, with occasional gaps, uncertainties, or minor inaccuracies that don’t undermine overall trust (AJP-2.1 2016). A law enforcement informant who has provided actionable intelligence on multiple occasions, with one or two reports that turned out to be inaccurate or exaggerated, fits this category. So does a media outlet with a strong editorial track record that has occasionally published corrections.

C (“fairly reliable”) introduces genuine doubt about the source’s authenticity, trustworthiness, or competency, though the source has provided valid information in the past (AJP-2.1 2016). Many real-world sources land here: people who have some access and some track record but who have also been wrong enough times, or whose motivations are uncertain enough, that their reporting requires careful corroboration before anyone acts on it. A private investigator who occasionally uses a particular public records vendor and has found their data mostly accurate but has caught errors in roughly a quarter of cases would rate that vendor a C.

D (“not usually reliable”) means significant doubt exists, though the source has provided valid information in the past (AJP-2.1 2016). The distinction between C and D is one of degree: a C source has provided valid information enough times to maintain a baseline of cautious trust, while a D source has been wrong often enough that their reporting should be treated as a lead to verify rather than a finding to rely on. Consider a corporate intelligence analyst’s contact at a competitor who tends to exaggerate what they know and has been caught passing along rumors as firsthand observations, but who did accurately report a product launch six months early; the analyst would rate that contact a D.

E (“unreliable”) means the source lacks authenticity, trustworthiness, and competency and has a history of providing invalid information (AJP-2.1 2016). An E-rated source has been tested repeatedly and found wanting. Their reporting should be treated with extreme skepticism, though even an E-rated source can occasionally provide accurate information. That possibility is why the system keeps the information credibility rating independent from the source reliability rating; a broken clock is still right twice a day, and the credibility scale gives you a way to flag those moments without upgrading the source.

F (“reliability cannot be judged”) is the default for any source without an established track record (AJP-2.1 2016). Every new source starts here: a first-time informant calling in a tip, a newly discovered website, a foreign liaison partner whose reporting you haven’t had time to evaluate. A due diligence investigator receiving a referral to a new source in an unfamiliar market, a law enforcement analyst processing a walk-in report, or a corporate security team encountering a new open-source platform would all assign F until the source’s reporting history provides a basis for moving the rating up or down.
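The cumulative logic of the scale (every source starts at F, and the letter moves only as a reporting history builds up) can be sketched as a small record type. This is a hypothetical illustration, not something AJP-2.1 prescribes: the `Source` class, its fields, and the `regrade` method are assumptions. The code deliberately does not convert hit rates into letters, because the scale calls for analyst judgment rather than fixed thresholds.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

# The six reliability letters; a frozenset avoids substring matches like "AB".
GRADES = frozenset("ABCDEF")


@dataclass
class Source:
    """Hypothetical source record. New sources default to F."""
    name: str
    rating: str = "F"
    outcomes: List[bool] = field(default_factory=list)  # True = report checked out
    history: List[str] = field(default_factory=list)    # audit trail of regrades

    def record_outcome(self, valid: bool) -> None:
        # Logging one report never changes the grade on its own:
        # a single miss (or hit) is data, not a regrade.
        self.outcomes.append(valid)

    def track_record(self) -> Tuple[int, int]:
        """Return (valid reports, total reports) for the analyst to weigh."""
        return sum(self.outcomes), len(self.outcomes)

    def regrade(self, letter: str, reason: str) -> None:
        """Record an analyst's decision to move the grade, with a rationale."""
        if letter not in GRADES:
            raise ValueError(f"unknown reliability grade: {letter!r}")
        self.history.append(f"{self.rating} -> {letter}: {reason}")
        self.rating = letter


if __name__ == "__main__":
    vendor = Source("public records vendor")
    for valid in [True, True, True, False]:
        vendor.record_outcome(valid)
    print(vendor.rating, vendor.track_record())  # F (3, 4)
    vendor.regrade("C", "errors caught in roughly a quarter of checks")
    print(vendor.history)  # ['F -> C: errors caught in roughly a quarter of checks']
```

Keeping the outcome log separate from the grade mirrors the point above: an A is lost through a pattern of degradation, so the record accumulates evidence and leaves the regrade to a person who can explain it.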

The Information Credibility Scale

The reliability scale asks about the source’s history. The credibility scale asks about the specific piece of information in front of you right now. It assigns a number from 1 through 6 based on the information’s plausibility, internal consistency, and corroboration status.

A rating of 1 (“confirmed by other sources”) is the highest credibility assessment: the information has been independently corroborated by at least one separate source, it is logical and internally consistent, and it aligns with other known information on the subject (AJP-2.1 2016). Independence is the operative word. The confirmation has to arrive through a separate collection path or reporting chain, not from another outlet repeating the original report. A corporate intelligence report that a competitor is planning to exit a market segment reaches a 1 when separate, unrelated sources (a trade publication citing company insiders, a job posting pattern showing hiring freezes in that segment, and a supplier reporting canceled orders) all point to the same conclusion through different channels.
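The independence requirement is the part most easily fumbled in practice: two outlets repeating the same insider story are one reporting chain, not two. Below is a minimal sketch of that check, assuming each report can be tagged with a source identifier and a label for its collection path. The `Report` type and both functions are hypothetical, and the check covers only the corroboration prerequisite, not the logic and consistency tests a 1 also requires.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Report:
    source_id: str  # who reported it
    chain: str      # hypothetical label for the collection path / reporting chain


def independent_corroborations(original: Report, others: Iterable[Report]) -> int:
    """Count corroborating reports that are independent of the original.

    A report counts only if it comes from a different source AND through a
    chain not already counted. Reports sharing a chain count at most once,
    and reports sharing the original's chain don't count at all.
    """
    counted_chains = {original.chain}
    count = 0
    for report in others:
        if report.source_id == original.source_id or report.chain in counted_chains:
            continue
        counted_chains.add(report.chain)
        count += 1
    return count


def meets_corroboration_test(original: Report, others: Iterable[Report]) -> bool:
    """True if at least one independent corroboration exists (necessary, not sufficient, for a 1)."""
    return independent_corroborations(original, others) >= 1


if __name__ == "__main__":
    claim = Report("trade publication", "company insiders")
    others = [
        Report("second outlet", "company insiders"),  # echoes the same chain
        Report("job postings", "hiring data"),
        Report("supplier", "canceled orders"),
    ]
    print(independent_corroborations(claim, others))  # 2
```

In the market-exit example, the second outlet adds nothing because it draws on the same insiders; the job postings and the supplier each open a new channel.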

A rating of 2 (“probably true”) means the information hasn’t been independently confirmed but is logically sound and consistent with other reporting on the subject (AJP-2.1 2016). This is the most common rating for solid reporting from good sources where you haven’t yet secured corroboration. The difference between 1 and 2 comes down to whether someone else has independently verified the same conclusion; a 2 doesn’t reflect lower confidence in the source or the logic, only the absence of that independent check.

A 3 (“possibly true”) means the information has not been confirmed, is reasonably logical, and agrees with some but not all other information on the subject (AJP-2.1 2016). A 3 tells the reader that the information fits part of what’s known but hasn’t been strongly supported. It’s plausible and worth tracking, but not a sound basis for consequential decisions without additional support. A law enforcement analyst receiving a tip that a suspect has relocated to a new address, where the new address is in the same city the suspect was last seen in (consistent) but a recent utility records check shows no new accounts opened there (partially conflicting), would rate the tip a 3.

A 4 (“doubtful”) means the information is possible but not particularly logical, and there’s no other information on the subject to compare it against (AJP-2.1 2016). You can’t confirm it, you can’t rule it out, and the absence of corroborating or contradicting information leaves you without a basis for moving it in either direction. A police analyst receiving a tip about an unlikely-sounding scheme in a sector they have no other coverage of would rate the information a 4: the claim isn’t impossible, but it doesn’t quite add up, and there’s nothing to check it against.

A 5 (“improbable”) means the information is not confirmed, not logical, and actively contradicted by other reporting (AJP-2.1 2016). Something about the report doesn’t hold together, and other evidence points in a different direction. A 5 is a strong reason for skepticism, though independent sources can all be wrong in the same direction, so a 5 doesn’t close the book on the information permanently. A corporate security team receiving an anonymous tip that a senior executive is leaking proprietary data, when the executive has no access to the systems the tip describes and three separate internal audits show no anomalous data transfers, would rate the tip a 5.

A 6 (“truth cannot be judged”) means there’s no basis for evaluating the information at all (AJP-2.1 2016). Like F on the reliability scale, a 6 reflects the analyst’s knowledge gap, not the information’s quality. The analyst simply doesn’t have enough context, corroboration, or contradicting evidence to place the information anywhere on the credibility spectrum.
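Read together, the six definitions reduce to three questions: has the information been independently confirmed, is it logical, and how does it sit against other information on the subject? The sketch below encodes only the combinations the definitions spell out. It is an illustrative assumption, not an official algorithm: the `Agreement` categories and the `credibility` function are hypothetical, “reasonably logical” (rating 3) is treated as logical, and any combination the definitions don’t cover returns `None` so the analyst has to make the call.

```python
from enum import Enum
from typing import Optional


class Agreement(Enum):
    """How the information sits against other information on the subject."""
    CONSISTENT = "consistent with other information"
    PARTIAL = "agrees with some but not all other information"
    NO_COMPARISON = "no other information on the subject"
    CONTRADICTED = "contradicted by other information"


# (independently confirmed?, logical?, agreement) -> credibility rating,
# one entry per definition in the text.
_RULES = {
    (True, True, Agreement.CONSISTENT): 1,       # confirmed by other sources
    (False, True, Agreement.CONSISTENT): 2,      # probably true
    (False, True, Agreement.PARTIAL): 3,         # possibly true
    (False, False, Agreement.NO_COMPARISON): 4,  # doubtful
    (False, False, Agreement.CONTRADICTED): 5,   # improbable
}


def credibility(confirmed: bool, logical: bool, agreement: Agreement,
                judgeable: bool = True) -> Optional[int]:
    """Return 1-6 for combinations the definitions cover, else None.

    The source's reliability letter is deliberately not an input:
    the number is assessed on the information alone.
    """
    if not judgeable:
        return 6  # no basis for evaluating the information at all
    return _RULES.get((confirmed, logical, agreement))


if __name__ == "__main__":
    # The relocation tip: consistent with the city, partly contradicted by utility records.
    print(credibility(False, True, Agreement.PARTIAL))  # 3
    # The leak tip: illogical given the executive's access, contradicted by the audits.
    print(credibility(False, False, Agreement.CONTRADICTED))  # 5
```

Returning `None` rather than forcing a number is a deliberate choice: a combination such as logical-but-contradicted is a signal for closer analysis, and filling it with a default would quietly replace the analyst’s judgment.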

The Digraph: How the Ratings Combine

The two scales combine into a single digraph that travels with the information through every stage of processing. The letter comes first, the number second: B2 means a usually reliable source providing probably true information. E1 means an unreliable source whose specific piece of information has been independently confirmed. F6 means an untested source providing unverifiable information. These combinations capture the full range of real-world situations, including the counterintuitive ones.
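Because the digraph is just a letter followed by a number, it’s easy to validate and expand mechanically wherever ratings travel through a workflow. The parser below is a hypothetical sketch (`Digraph` and `parse_digraph` are illustrative names, not part of any standard tooling); the descriptors are the NATO terms quoted above. It places no constraint between the two halves, so A5 and E1 parse as readily as B2.

```python
import re
from typing import NamedTuple

# NATO descriptors as quoted in the text.
RELIABILITY = {
    "A": "completely reliable",
    "B": "usually reliable",
    "C": "fairly reliable",
    "D": "not usually reliable",
    "E": "unreliable",
    "F": "reliability cannot be judged",
}
CREDIBILITY = {
    1: "confirmed by other sources",
    2: "probably true",
    3: "possibly true",
    4: "doubtful",
    5: "improbable",
    6: "truth cannot be judged",
}

_DIGRAPH = re.compile(r"([A-F])([1-6])")


class Digraph(NamedTuple):
    reliability: str  # source reliability letter, A-F
    credibility: int  # information credibility number, 1-6

    def describe(self) -> str:
        return (f"{self.reliability}{self.credibility} "
                f"(source: {RELIABILITY[self.reliability]}, "
                f"information: {CREDIBILITY[self.credibility]})")


def parse_digraph(text: str) -> Digraph:
    """Parse a rating such as "B2" (case-insensitive, surrounding spaces ignored)."""
    match = _DIGRAPH.fullmatch(text.strip().upper())
    if match is None:
        raise ValueError(f"not a valid digraph: {text!r}")
    # No cross-check between letter and number: all 36 pairs are valid.
    return Digraph(match.group(1), int(match.group(2)))


if __name__ == "__main__":
    for code in ["B2", "E1", "F6"]:
        print(parse_digraph(code).describe())
```

For B2 this prints `B2 (source: usually reliable, information: probably true)`.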

The two ratings are independent of each other, and they should stay that way. A completely reliable source can provide improbable information (A5), and an unreliable source can provide confirmed information (E1) (JDP 2-00 2011). If a B-rated source provides information, the analyst still has to evaluate that specific information on its own terms (logical consistency, corroboration status, plausibility) and assign a credibility rating based on what the evidence shows. A source’s track record tells you how often their reporting has been accurate in the past; it tells you nothing about whether this particular report is accurate today. The first article in this series covered this failure in detail: when an analyst lets a source’s reliability rating pull the credibility rating in its direction, a single source can contaminate an entire assessment, with unverified information borrowing credibility from the source’s track record.

F6 is the single most common digraph in OSINT work. It means the analyst has no basis for judging either the source’s reliability or the information’s credibility, not that the information is wrong; it may still be accurate, actionable, and valuable. Much incoming OSINT reporting carries F6 because it arrives from sources with no established track record and can’t be corroborated right away. The rating is a starting point for analysis, not a verdict: it tells you to evaluate the information carefully, with no track record to lean on and no independent confirmation to anchor your judgment. In high-volume OSINT environments, that is where the majority of the material you’ll work with begins (JDP 2-00 2011).

Limitations and Where the System Struggles

The Admiralty Code was designed for environments where sources are identifiable and develop track records over time: human informants, liaison services, technical collection platforms with known performance histories. It maps less cleanly onto environments where sources are anonymous, ephemeral, or too numerous to track individually. An analyst monitoring a social media platform during a developing crisis encounters hundreds of individual sources in a single day, most of whom will never post again on the same topic. Assigning each one an F rating is technically correct but provides no differentiation between a long-established account with a verified identity and a brand-new anonymous account created that morning. Both receive F, even though the analyst’s working assessment of their reliability would differ significantly.

The system also lacks fields for several dimensions that modern analytical environments require. There’s no way to capture the source’s potential motivation, which frameworks like the 5 Pillars of Verification (a structured method for evaluating source provenance, motivation, and original context) treat as a core evaluation criterion. There’s no handling code specifying how the information can be shared, which the UK law enforcement 5x5x5 system addresses with its third dimension. There’s no timeliness indicator, and in OSINT work, a report from three hours ago and a report from three months ago carry very different analytical weight.

These boundaries reflect the system’s original purpose: evaluating intelligence reports flowing through institutional channels with established security protocols. Practitioners working outside that context (in corporate intelligence, private investigation, open-source analysis, or cross-sector collaboration) should understand what the system captures and what additional evaluation criteria they may need to layer on top of it. The system gives you reliability and credibility in a portable, universally recognized shorthand; it’s your job to track everything else.
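One way to layer those missing dimensions on top is to carry the digraph inside a larger record rather than stretching the code itself. The sketch below is an assumption, not a standard format: the `EvaluatedReport` fields are hypothetical, the handling field is free text rather than the actual 5x5x5 handling codes, and timeliness is computed from a collection timestamp at read time so it never goes stale.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class EvaluatedReport:
    digraph: str                      # Admiralty Code rating, e.g. "B2"
    collected_at: datetime            # when the information was posted or collected
    motivation: Optional[str] = None  # analyst's note on why the source is reporting
    handling: Optional[str] = None    # sharing restriction (free text in this sketch)

    def age(self, now: datetime) -> timedelta:
        """Timeliness, computed at read time rather than stored."""
        return now - self.collected_at

    def age_hours(self, now: datetime) -> float:
        return self.age(now).total_seconds() / 3600


if __name__ == "__main__":
    now = datetime(2024, 6, 1, 12, 0, tzinfo=timezone.utc)
    fresh = EvaluatedReport("F6", now - timedelta(hours=3),
                            motivation="eyewitness, no apparent stake")
    stale = EvaluatedReport("F6", now - timedelta(days=90))
    # Same digraph, very different analytical weight.
    print(fresh.age_hours(now), stale.age_hours(now))  # 3.0 2160.0
```

Both reports carry the same F6, so the digraph alone cannot separate them; the timestamp and motivation note carry the context the code was never designed to hold.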

References

McLachlan, Donald. 1968. Room 39: Naval Intelligence in Action 1939–45. London: Weidenfeld and Nicolson.

North Atlantic Treaty Organization. 2016. AJP-2.1, Edition B: Allied Joint Doctrine for Intelligence Procedures. NATO Standardization Office.

UK Ministry of Defence. 2011. Joint Doctrine Publication 2-00: Understanding and Intelligence Support to Joint Operations. 4th Edition.

Originally published on Substack
